Differential geometry is the field of mathematics dealing with the geometry of surfaces, such as planes and curved surfaces, and of higher dimensional curved spaces. It’s used extensively in physics to describe the curvature of space caused by gravity in the theory of general relativity, and also has applications in several other fields of science and engineering. In its simplest form, differential geometry deals with the shapes and mathematical properties of what we intuitively think of as “surfaces” – for example, a sheet of paper, a draped cloth, the surface of a ball, or the curved shape of a saddle.

One of the most important properties of a surface is the curvature, or more specifically the Gaussian curvature. Intuitively, this is just a measure of how curved the surface is, although in some cases the answer isn’t quite as intuitive as you might think. Imagine a flat surface, like a polished table top, or a completely flat, unbent sheet of paper. Straightforwardly enough, a flat surface like this has a Gaussian curvature value of zero.

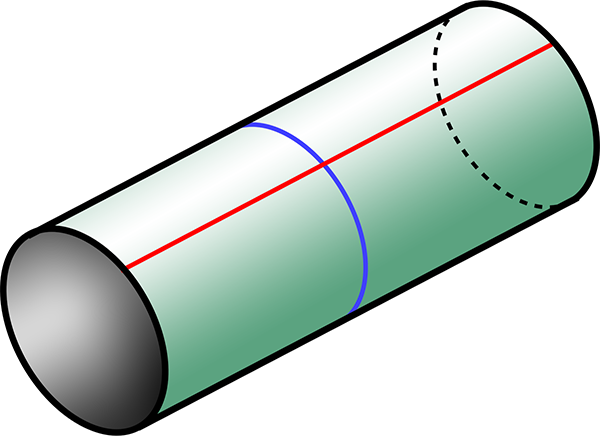

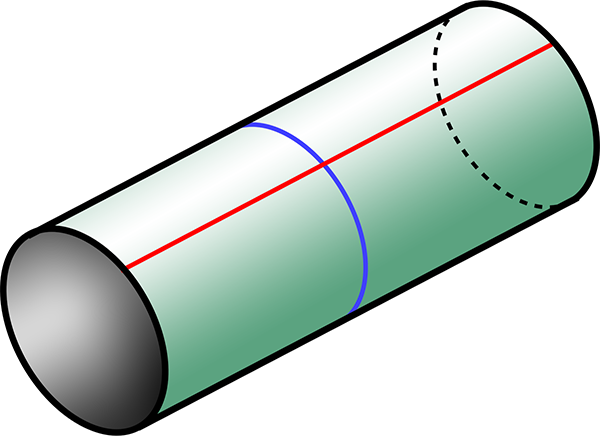

One of the most important results in differential geometry is the Theorema Egregium, which is Latin for “remarkable theorem”, proven by the 19th century German mathematician and physicist Carl Friedrich Gauss. The Theorema Egregium states that the Gaussian curvature of a surface does not change if the surface is bent without stretching it. So let’s take our flat sheet of paper and roll it up into a cylinder – we can do this without stretching or crumpling the paper. The resulting cylinder has the same Gaussian curvature as the flat sheet, namely zero.

That might sound a bit strange, but it’s a result of the way that the Gaussian curvature of a surface is defined. A two-dimensional surface has two different directions that it can be curved in, and the greatest and least amounts of curvature at a point, which occur in perpendicular directions, are called the principal curvatures. These measure how the surface bends by different amounts in different directions. Imagine drawing a straight line on a sheet of flat paper – the principal curvature in that direction is zero because the paper is flat. Now draw a line perpendicular to the first one – the principal curvature in that direction is also zero. The Gaussian curvature of the surface is the product of the two principal curvatures – in this case zero times zero.

Now if we roll the paper into a cylinder, we can draw a line around the circular part, creating a circle like a hoop around a barrel. This is the maximum curvature of the cylinder, so one of the principal curvatures, and is non-zero. It’s defined as a positive number equal to 1 divided by the radius r of the cylinder. As the radius gets smaller, this principal curvature 1/r gets bigger. But a cylinder has a second principal curvature, perpendicular to the first one. This is along a line running the length of the cylinder parallel to the axis, and this line is perfectly straight – not curved at all. So it has a principal curvature of zero. And the Gaussian curvature of the cylindrical surface is the product (1/r)×0 = 0.

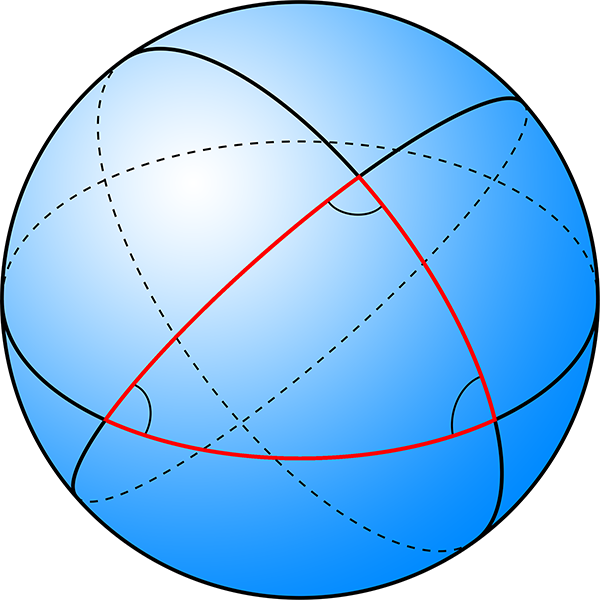

A cylinder, as could be formed by rolling a sheet of paper. The blue line is a line of maximum curvature, wrapped around the cylinder. The red line, along the cylinder perpendicular to the blue line, has zero curvature.

So what surfaces have non-zero Gaussian curvature? By the Theorema Egregium, they must be surfaces that you can’t bend a sheet of paper into without stretching it. An example is the surface of a sphere. If you try to wrap a sheet of paper smoothly around a sphere, you can’t do it without stretching, scrunching, or tearing the paper. If we draw a line around a sphere (like an equator), that’s one principal curvature, equal to 1/r, similar to the cylinder, where r is now the radius of the sphere. A line perpendicular to that (like a line of longitude) also has the same principal curvature, 1/r, due to the symmetry of the sphere. The Gaussian curvature of a sphere is then (1/r)×(1/r) = 1/r².
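To make the "product of the two principal curvatures" idea concrete, here is a minimal Python sketch for the three surfaces discussed so far. The function name and the radius value are made up for illustration.

```python
def gaussian_curvature(k1: float, k2: float) -> float:
    """Gaussian curvature as the product of the two principal curvatures."""
    return k1 * k2


r = 2.0  # hypothetical radius, in arbitrary length units

plane = gaussian_curvature(0.0, 0.0)           # flat in both directions
cylinder = gaussian_curvature(1.0 / r, 0.0)    # curved around, straight along
sphere = gaussian_curvature(1.0 / r, 1.0 / r)  # same curvature in both directions

print(plane, cylinder, sphere)
```

The cylinder comes out as zero no matter how small you make r, which is the whole point of the rolled-up paper example.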

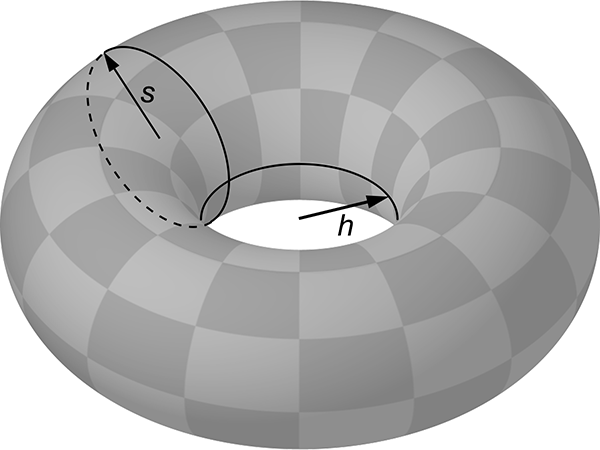

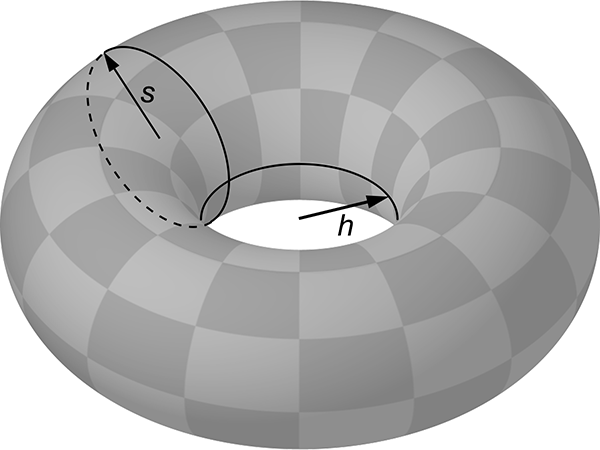

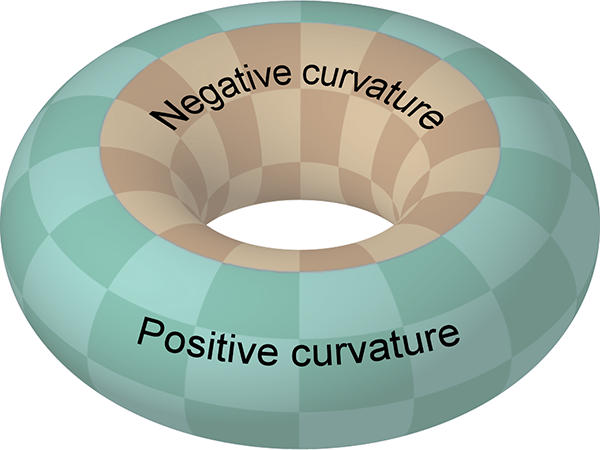

And then there are surfaces with a saddle shape, bending upwards in one direction and downwards in a perpendicular direction. An example is the surface on the inside of the hole in a torus (or doughnut shape). If you imagine standing on the surface here, in one direction it curves downwards with a radius s equal to that of the solid part of the torus, while in the perpendicular direction the surface curves upwards with radius h, the radius of the hole. Curving upwards is defined as a negative curvature, so the two principal curvatures are 1/s and -1/h, and the Gaussian curvature here is the product, -1/(sh).

A torus, showing the solid radius s and the radius of the hole h. The point where the two circles intersect has Gaussian curvature -1/(sh). (Image modified from public domain image from Wikimedia Commons.)

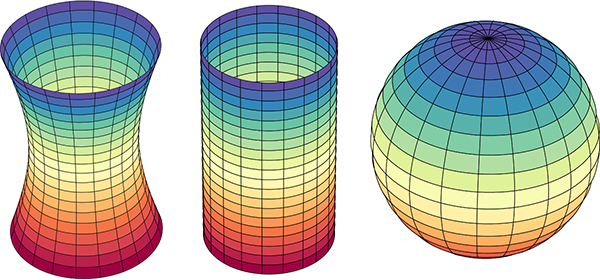

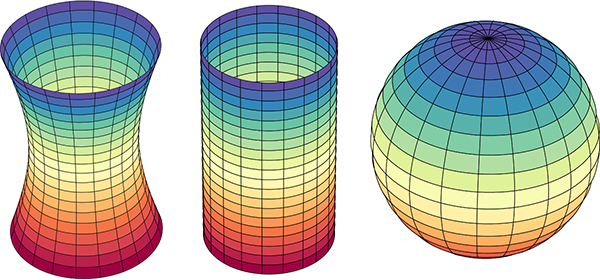

Here are examples of surfaces with negative, zero, and positive curvature, respectively a hyperboloid, cylinder, and sphere:

Illustration of surfaces with negative, zero, and positive Gaussian curvature: respectively a hyperboloid, cylinder, and sphere. (Image modified from public domain image from Wikimedia Commons.)

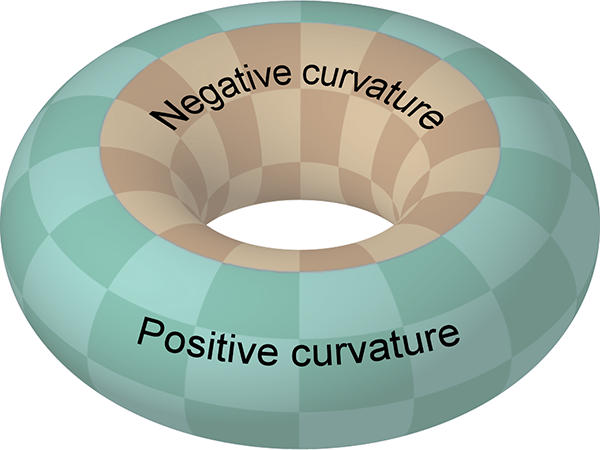

Another way to think about Gaussian curvature is to imagine wrapping a sheet of paper snugly onto the surface. If you can do it without stretching or tearing the paper (such as a cylinder), the curvature is zero. If you have to scrunch the paper up (like wrapping a sphere), the curvature is positive. If you have to stretch/tear the paper (like the saddle or hyperboloid), the curvature is negative. It’s also important to realise that the Gaussian curvature doesn’t need to be the same everywhere – it can vary across the surface. It’s zero at all points on a cylinder, and 1/r² at all points on a sphere, but on a torus the curvature is negative on the inside of the hole and positive on the outside, with lines of zero curvature running around the top and bottom.

Diagram of a torus, showing regions of positive (green) and negative (orange) Gaussian curvature. The boundary between the regions has zero curvature.
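If you want to see how the curvature changes as you walk around the tube of the torus, here is a rough Python sketch. It uses the standard textbook formula for the Gaussian curvature of a torus, which is not derived in this post, and the radii and the angle convention (v = 0 on the outer rim, v = π inside the hole) are my own choices for illustration.

```python
import math


def torus_gaussian_curvature(s: float, h: float, v: float) -> float:
    """Gaussian curvature on a torus with solid (tube) radius s and hole radius h.

    v is the angle around the tube: 0 on the outer rim, pi/2 on the top,
    pi on the inside of the hole. Standard formula:
    K = cos(v) / (s * (R + s*cos(v))), with R = h + s.
    """
    big_r = h + s  # distance from the torus axis to the centre of the tube
    return math.cos(v) / (s * (big_r + s * math.cos(v)))


s, h = 1.0, 2.0  # hypothetical radii
inner = torus_gaussian_curvature(s, h, math.pi)    # inside of the hole
top = torus_gaussian_curvature(s, h, math.pi / 2)  # top of the torus
outer = torus_gaussian_curvature(s, h, 0.0)        # outer rim

print(inner, top, outer)
```

On the inside of the hole this reduces to minus one over s times h, the value quoted earlier, and it passes through zero on the top before turning positive on the outside.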

A property of two-dimensional curvature is that it affects the geometry of two-dimensional shapes on the surface. A surface with zero Gaussian curvature we call Euclidean, and the Euclidean geometry matches the familiar geometry we learn at primary and secondary school. This includes all those properties of circles and triangles and parallel lines that you learnt. In particular, let’s talk about triangles. Triangles have three internal angles and, as we learnt in school, if you add up the sizes of the angles you get 180°. In the angular unit known as radians, 180° is equal to π radians. (To convert from degrees to radians, divide by 180 and multiply by π.)

So, in a Euclidean geometry, the angle sum of a triangle equals π radians. This is the case for triangles drawn on a flat sheet of paper, and it also holds if you wrap the paper around a cylinder. The triangle bends around the cylinder in the positive principal curvature direction, but its Gaussian curvature remains zero (because of the Theorema Egregium). And if you measure the angles and add them up, they still add up to π radians (i.e. 180°).
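You can check the flat case numerically. The following is a small Python sketch, with an arbitrary triangle and a helper name of my own choosing, that measures the three interior angles from the corner coordinates and adds them up.

```python
import math


def interior_angles(a, b, c):
    """Interior angles (in radians) of a flat triangle with 2D corners a, b, c."""

    def angle_at(p, q, r):
        # Angle at corner p between the edges p->q and p->r.
        ux, uy = q[0] - p[0], q[1] - p[1]
        vx, vy = r[0] - p[0], r[1] - p[1]
        dot = ux * vx + uy * vy
        cross = ux * vy - uy * vx
        return abs(math.atan2(cross, dot))

    return angle_at(a, b, c), angle_at(b, c, a), angle_at(c, a, b)


angles = interior_angles((0.0, 0.0), (4.0, 0.0), (1.0, 3.0))
angle_sum = sum(angles)

print(math.degrees(angle_sum))
```

Whatever three corners you pick (as long as they are not all on one line), the sum comes out as π radians to within floating point rounding.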

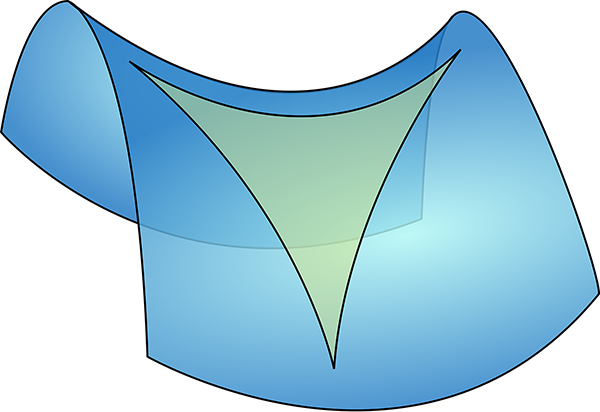

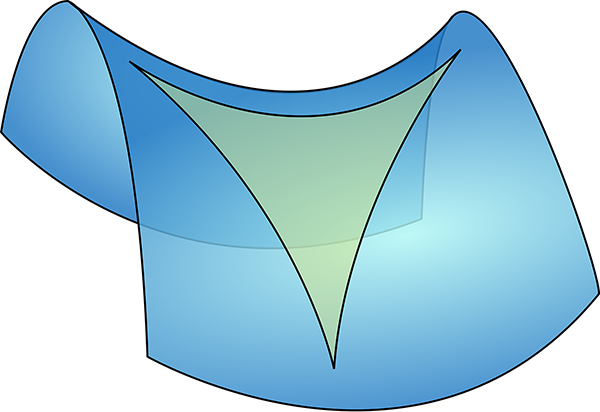

However, if you draw a triangle on a surface of negative curvature, the lines are locally straight but from a three-dimensional point of view they are bowed inwards by the curvature of the surface, pinching the angles to make them smaller.

A saddle shaped surface with negative curvature, with a triangle drawn on it. The angles become pinched in and smaller. (Image modified from public domain image from Wikimedia Commons.)

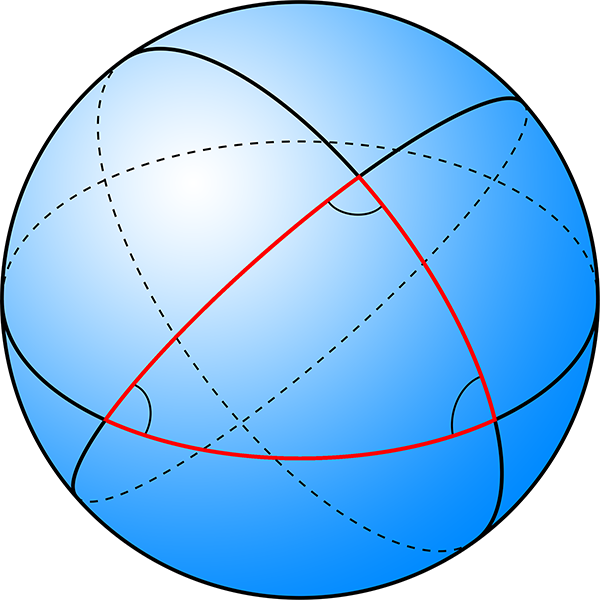

On the other hand, if you draw a triangle on the surface of a sphere, which has positive curvature, the lines seem to bow outwards, making the angles larger.

A spherical surface with positive curvature, with a triangle drawn on it. The angles become bulged out and larger. (Image modified from public domain image from Wikimedia Commons.)

Now, here’s the cool thing. On a negative curvature surface, the angle sum of a triangle is less than π radians, while on a positive curvature surface it’s greater than π radians. Imagine a really small triangle on either of these surfaces. Over a very small area, the curvature is not so evident, and the angle sum is only different from π radians by a small amount. But for a larger triangle, the curvature makes a bigger difference, and the angle sum differs from π radians by a larger amount. It turns out there’s a mathematical relationship between the Gaussian curvature of the surface, the size of the triangle, and the amount by which the angle sum differs from π radians:

The angle sum of a triangle = π radians + the integral of the Gaussian curvature over the area of the triangle. [Equation 1]

If you’re not familiar with calculus, the integral part basically means you take small patches of area within the triangle, multiply the Gaussian curvature in the patch by the area of the patch and add them all up. If the Gaussian curvature is constant (such as for a sphere), the integral is just equal to the curvature times the area of the triangle.
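For readers who prefer symbols, Equation 1 can be written out as below. The symbol names are my own choice for illustration (α, β, γ for the three angles, T for the triangle, K for the Gaussian curvature, dA for a small patch of area), and it applies to triangles whose sides are locally straight lines on the surface. The second form is the special case where K is constant.

```latex
\alpha + \beta + \gamma = \pi + \iint_{T} K \, dA
\qquad\text{and, for constant } K:\qquad
\alpha + \beta + \gamma = \pi + K \cdot \operatorname{Area}(T)
```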

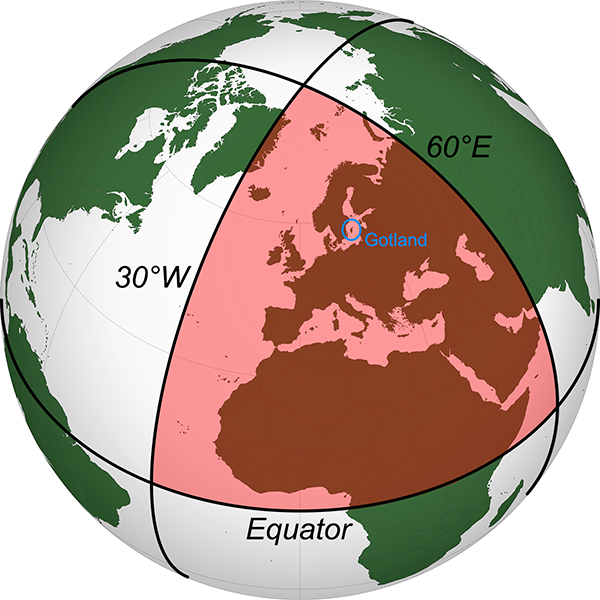

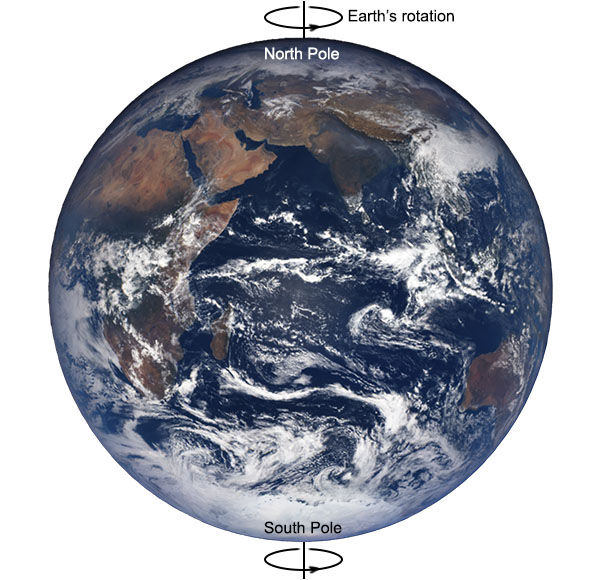

To take a concrete example, imagine a sphere of radius one unit. The surface area of the sphere is 4π square units. Now let’s draw a triangle on the sphere. If we imagine the sphere with lines of latitude and longitude like the Earth, we’ll take the equator as one of our triangle sides, and two lines of longitude running from the North Pole to the equator, 90° apart. The angle between the equator and any line of longitude is 90° (π/2 radians), and the angle at the North Pole between our chosen two lines of longitude is also 90° (by construction). So the angle sum of this triangle is 3π/2 radians, which is π/2 radians greater than π radians.

From equation 1, this means that the integral of the Gaussian curvature over the triangle equals π/2. The area of the triangle is one eighth the surface area of the whole sphere = 4π/8 = π/2 square units. The Gaussian curvature of a sphere is constant, so curvature×(π/2 square units) = π/2, which means the curvature is equal to 1. We said the sphere has a radius of one unit, and Gaussian curvature of a sphere is 1/r², so the curvature is just 1. It all works out!
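Here is a rough Python sketch of the "add up small patches" version of this check. It chops the one-eighth-sphere triangle into small patches in latitude and longitude, multiplies each patch area by the curvature, and compares the total with the amount by which the angle sum exceeds π. The function name and the number of patches are arbitrary choices of mine.

```python
import math


def integrate_curvature_over_octant(radius: float, n: int = 400) -> float:
    """Sum (curvature * patch area) over one eighth of a sphere.

    The triangle runs from the pole (colatitude 0) to the equator
    (colatitude pi/2) and spans 90 degrees of longitude.
    """
    k = 1.0 / radius**2          # Gaussian curvature of a sphere
    d_theta = (math.pi / 2) / n  # patch size in colatitude
    d_phi = (math.pi / 2) / n    # patch size in longitude
    total = 0.0
    for i in range(n):
        theta = (i + 0.5) * d_theta  # middle of this ring of patches
        # Area of one patch in this ring: r^2 * sin(theta) * d_theta * d_phi
        patch_area = radius**2 * math.sin(theta) * d_theta * d_phi
        total += k * patch_area * n  # n identical patches around the ring
    return total


angle_sum = 3 * (math.pi / 2)  # three right angles
excess = angle_sum - math.pi   # how far the angle sum is above pi
integral = integrate_curvature_over_octant(radius=1.0)

print(excess, integral)
```

Both numbers should agree closely with π/2, and changing the radius should make no difference, because the area grows as r² while the curvature shrinks as 1/r².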

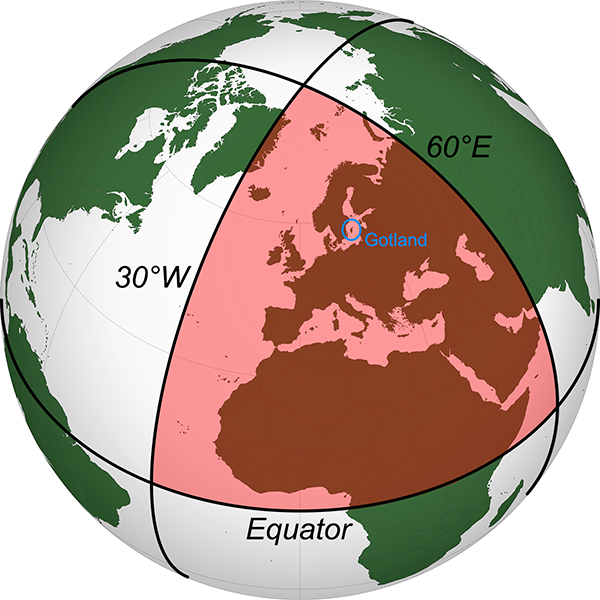

Now imagine we’re looking at such a triangle on the Earth itself. Our edges are the equator, and we’ll take the lines of longitude 30° west (running through eastern Greenland) and 60° east (through Russia and Kazakhstan, among other places). The area of this triangle, if we measured it, turns out to be 63.8 million square kilometres.

A triangle on Earth, with each angle equal to 90°. (Image modified from public domain image from Wikimedia Commons.)

Applying equation 1:

Angle sum of triangle = π radians + integral of Gaussian curvature over the area of the triangle

3π/2 radians = π radians + Gaussian curvature × 63.8 million square kilometres

π/2 radians = Gaussian curvature × 63.8 million square kilometres

Gaussian curvature = (π/2) / (63.8×10⁶)

1/r² = (π/2) / (63.8×10⁶)

r² = (63.8×10⁶) / (π/2)

r = √[(63.8×10⁶) / (π/2)]

r = 6371 kilometres

This is the radius of the Earth. And it’s exactly right. So simply by measuring the angles of a triangle drawn on the surface of the Earth, and the area within that triangle, we can show that the surface of the Earth is not flat, but curved, and we can determine the radius of the Earth.
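The same calculation as a short Python sketch, in case you want to try other triangles. The function name is made up, and it assumes the surface is a sphere so that the curvature is the same everywhere. Because the area is rounded to 63.8 million square kilometres, the result lands within a few kilometres of the quoted radius rather than on it exactly.

```python
import math


def radius_from_triangle(angle_sum_rad: float, area_km2: float) -> float:
    """Radius of a sphere, given a triangle's angle sum and enclosed area."""
    excess = angle_sum_rad - math.pi  # equals curvature * area (Equation 1)
    curvature = excess / area_km2     # constant curvature, K = 1/r^2
    return 1.0 / math.sqrt(curvature)


# Three right angles, and the rounded area from the text.
earth_radius_km = radius_from_triangle(3 * math.pi / 2, 63.8e6)

print(round(earth_radius_km))
```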

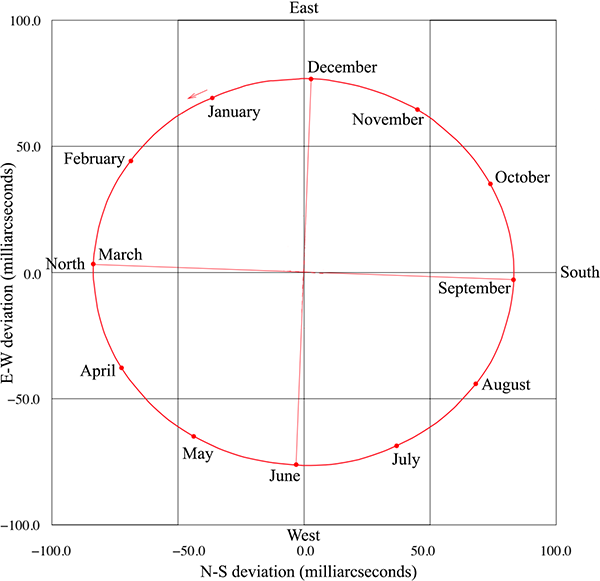

Obviously I haven’t gone out and measured such a triangle in practice. It would take expensive surveying gear and an extensive travel budget, but in principle you can certainly do it. Because the effect of the curvature depends on the size of the triangle, you need to survey a large enough area to detect the Earth’s curvature. How large?

I did some searching for angular accuracy of large scale surveys, but didn’t find anything particularly convincing. As a first estimate, I guessed conservatively that you might be able to measure the angles of a very large triangle to an accuracy of a tenth of a degree. With three corners, this makes the necessary deviation of the angle sum from π equal to 0.005 radians. The necessary area to see the effect of curvature is this number times the square of Earth’s radius, which gives 203,000 square kilometres, about the area of Belarus, or Kyrgyzstan. If you surveyed a triangle that big, measuring the area accurately and the angles to within 0.1° accuracy, you could experimentally verify that the Earth was curved, not flat.
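This estimate is just Equation 1 turned around: the area you need is the smallest detectable deviation of the angle sum, divided by the curvature. A minimal Python sketch follows, with names of my own invention, using the rounded deviation of 0.005 radians from the text.

```python
import math

EARTH_RADIUS_KM = 6371.0  # radius quoted in the text


def min_area_km2(angle_sum_deviation_rad: float,
                 radius_km: float = EARTH_RADIUS_KM) -> float:
    """Smallest triangle area whose angle sum differs from pi by the given amount."""
    # deviation = K * area with K = 1/r^2, so area = deviation * r^2
    return angle_sum_deviation_rad * radius_km**2


per_angle_accuracy = math.radians(0.1)  # a tenth of a degree, in radians
deviation = 3 * per_angle_accuracy      # three corners, roughly 0.005 radians
area = min_area_km2(0.005)              # the text's rounded 0.005 radians

print(deviation, area)
```

Passing the unrounded `deviation` instead of 0.005 gives a slightly larger area, which does not change the conclusion.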

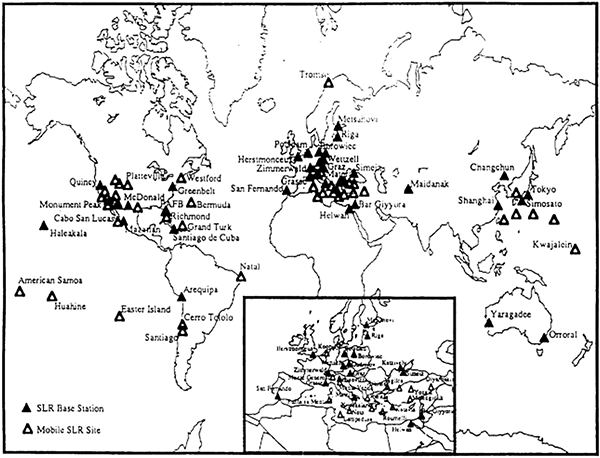

A reference on the accuracy of Global Navigation Satellite Systems used for geodetic surveying [1] gives an angular accuracy better than my guess, on the order of 2 minutes of arc (i.e. 1/30°) for this method. This gives us a necessary area of 20,300 square kilometres, about the area of Slovenia or Israel. Another reference on laser scanners used in surveying [2] gives an angular resolution of 3 mm over a range of 100 m, equivalent to 6 seconds of arc. If we can survey the angles of a triangle this accurately, we only need to measure an area of 1220 square kilometres, which is smaller than the Indian Ocean island nation of Comoros, and about the size of Gotland, Sweden’s largest island (circled in blue in the above figure).
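The unit conversions here are easy to get wrong, so here is a small Python sketch of them. The helper name is hypothetical, and it simply treats the 3 mm as a sideways offset seen from 100 m away.

```python
import math


def offset_to_arcseconds(offset_m: float, range_m: float) -> float:
    """Angle subtended by a sideways offset at a given range, in seconds of arc."""
    angle_rad = math.atan2(offset_m, range_m)
    return math.degrees(angle_rad) * 3600.0


laser_arcsec = offset_to_arcseconds(0.003, 100.0)  # 3 mm at 100 m
gnss_deg = 2.0 / 60.0                               # 2 minutes of arc, in degrees

print(laser_arcsec, gnss_deg)
```

The laser figure comes out at a little over 6 seconds of arc, consistent with the value quoted from the reference.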

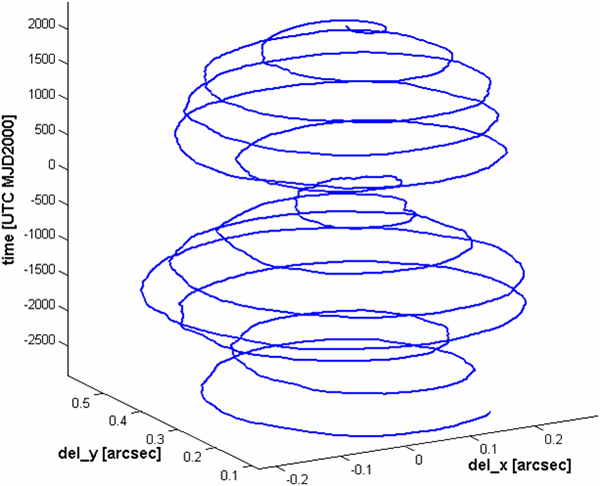

Interestingly, Gauss was likely inspired to develop a mathematical treatment of curvature by his experience as a surveyor. In the 1820s, he was tasked with surveying the Kingdom of Hanover (now part of Germany). To check the calibration of his equipment, he surveyed a large triangle with corners on the tops of the mountains Brocken, Hoher Hagen, and Großer Inselsberg, encompassing an area of 3000 km². Each mountaintop had direct line of sight to the others, so this was not actually a survey of a curved triangle along the surface of the Earth, but rather a flat triangle through 3D space above the surface of the Earth. Gauss considered this a validation check on the accuracy of the equipment, rather than a test to see if the Earth was curved. He measured the angles and added them up, finding the sum to be 180° to within his measurement uncertainty. Although this was not the curvature experiment described above, Gauss later drew on his surveying experience to investigate the properties of curved surfaces.

This concludes the “Earth is a Globe” portion of this entry, but there are two other cool applications of differential geometry:

Firstly, curvature of this type applies not only to two-dimensional surfaces, but also to three-dimensional space. It’s possible that the 3D space we live in has a non-zero curvature. This sort of curvature is tied up in general relativity, gravity, and the expansion of the universe. We know the curvature of space is very close to zero, but not whether it’s exactly zero – it may be slightly positive or negative. To measure the curvature of space directly, all we need to do is measure the angles of a large enough triangle. In this case, large enough means millions of light years. We can’t send surveyors out that far, but imagine if we contacted two alien civilisations by radio. It would take millions of years to coordinate, but we could ask them to measure the angles between our sun and the sun of the other civilisation at some predetermined time, and we could combine it with our own measurement, to determine the angle sum of this enormous triangle. If it doesn’t equal π radians, we’d have a direct measurement of the curvature of the universe.

Secondly, and perhaps more practically, the Theorema Egregium helps us eat pizza. If you take a long slice of pizza (and the base is not thick/crispy enough to be rigid), the tip can flop down messily.

A slice of pizza flopping along its length. Danger of making a mess!

Differential geometry to the rescue! The slice begins flat, so has zero Gaussian curvature. It can bend in one direction, flopping down and making a mess. But if we fold the slice by pushing the ends of the crust upwards and together, this creates a non-zero principal curvature across the slice. By the Theorema Egregium, the Gaussian curvature (the product of the principal curvatures) must remain zero, so the principal curvature in the perpendicular direction along the slice is now fixed at zero, and the slice cannot flop down any more!

A slice of pizza curved perpendicular to the length can no longer flop. Danger averted, thanks to differential geometry!
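The pizza argument boils down to one line of algebra. Writing κ₁ for the principal curvature across the slice and κ₂ for the one along its length (symbols chosen here for illustration), and treating the slice as something that bends without stretching:

```latex
K = \kappa_1 \kappa_2 = 0, \qquad \kappa_1 \neq 0 \;\Longrightarrow\; \kappa_2 = 0
```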

References:

[1] Correa-Muñoz, N. A., Cerón-Calderón, L. A. “Precision and accuracy of the static GNSS method for surveying networks used in Civil Engineering”. Ingeniería e Investigación, 38(1), p. 52-59, 2018. https://doi.org/10.15446/ing.investig.v38n1.64543

[2] Fröhlich, C. Mettenleiter, M. “Terrestrial laser scanning—new perspectives in 3D surveying”. International archives of photogrammetry, remote sensing and spatial information sciences, 36(8), p.W2, 2004. https://www.semanticscholar.org/paper/TERRESTRIAL-LASER-SCANNING-–-NEW-PERSPECTIVES-IN-3-Froehlich-Mettenleiter/4e117d837e43da8b9e281aec1ce9a8625430b6c3